Unlocking Development Results Data

Over the past year, our Results Data Initiative (RDI) has explored in depth two important links in the development data “results chain”: outputs (what development organizations implement, track, and monitor) and outcomes (the conditions of a community or area of influence). While development organizations and governments gather reams of output and outcome information, it’s been nearly impossible to compare this data across organizations – until now.

Currently in beta, the RDI Data Visualization Portal applies a standardized methodology (blog post forthcoming) for aggregating results information – with the goal of facilitating learning for better allocation decisions and program effectiveness. For example: when UNICEF plans to distribute textbooks to children in eastern Tanzania, it should be able to easily access data on what other donors have achieved, to avoid pitfalls and build on what works.

In particular, the RDI Data Visualization Portal seeks to respond to three big-picture questions:

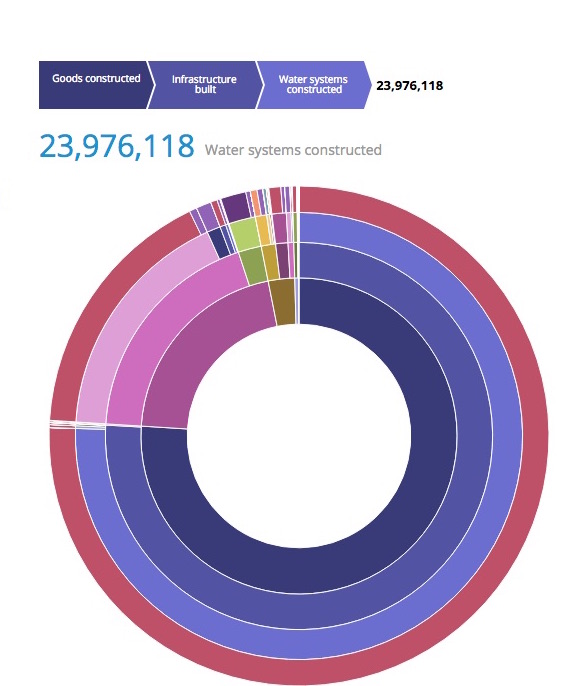

1. How can output data be aggregated and compared across organizations?

The portal’s first chart shows our “crosswalk” methodology in action. By clustering output data in this way, we have a preview of who is accomplishing what in health and agriculture sectors, across Tanzania, Ghana, and Sri Lanka.

Importantly, this chart shows only publicly accessible data – but it lays the groundwork for a potentially powerful database of aggregated results, provided that development partners commit to consistently publishing results information.
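To make the idea of a crosswalk concrete, here is a minimal sketch in Python. It is not the RDI methodology itself (that write-up is still forthcoming); it only assumes that a crosswalk can be represented as a lookup from each organization's own indicator label to a shared category. The organization names, indicator labels, category names, and values are all hypothetical placeholders.

```python
from collections import defaultdict

# Hypothetical crosswalk: (organization, its own indicator label) -> shared category.
# None of these labels come from the portal; they are illustrative only.
CROSSWALK = {
    ("Org A", "Bed nets distributed"): "bed nets distributed",
    ("Org B", "ITNs delivered to households"): "bed nets distributed",
    ("Org C", "Farmers trained in improved practices"): "farmers trained",
    ("Org A", "Smallholders receiving training"): "farmers trained",
}

# Hypothetical output records, as each organization might publish them.
RECORDS = [
    {"org": "Org A", "indicator": "Bed nets distributed", "value": 1000},
    {"org": "Org B", "indicator": "ITNs delivered to households", "value": 250},
    {"org": "Org B", "indicator": "ITNs delivered to households", "value": 50},
    {"org": "Org C", "indicator": "Farmers trained in improved practices", "value": 40},
    {"org": "Org C", "indicator": "Roads rehabilitated (km)", "value": 12},
]


def aggregate_outputs(records, crosswalk):
    """Sum reported values per shared category and organization.

    Records whose (org, indicator) pair has no crosswalk entry are
    returned separately rather than silently dropped.
    """
    totals = defaultdict(lambda: defaultdict(float))
    unmapped = []
    for rec in records:
        category = crosswalk.get((rec["org"], rec["indicator"]))
        if category is None:
            unmapped.append(rec)
            continue
        totals[category][rec["org"]] += rec["value"]
    # Convert nested defaultdicts to plain dicts for easier inspection.
    return {cat: dict(orgs) for cat, orgs in totals.items()}, unmapped


totals, unmapped = aggregate_outputs(RECORDS, CROSSWALK)
for category, by_org in totals.items():
    print(category, by_org)
print("unmapped records:", len(unmapped))
```

Keeping unmapped records visible matters in practice: an indicator with no crosswalk entry is a gap in coverage, not a zero.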

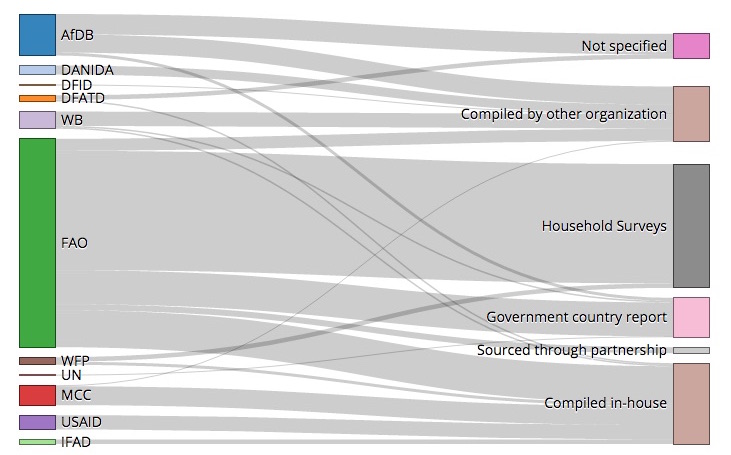

2. Where does outcome data come from?

Every development organization curates its own list of priority outcome indicators – but where does the underlying data come from? The portal’s second chart “maps” development organizations’ indicators back to their source.

Initially, our goal was to “crosswalk” indicators from different agencies. However, further research on results data suggests it would be more productive to identify underlying data sources, since coordinating efforts to improve the collection of indicator data – by increasing the frequency or geographic detail of Demographic and Health Surveys, for example – may be more efficient in the long run than coordinating indicators themselves.

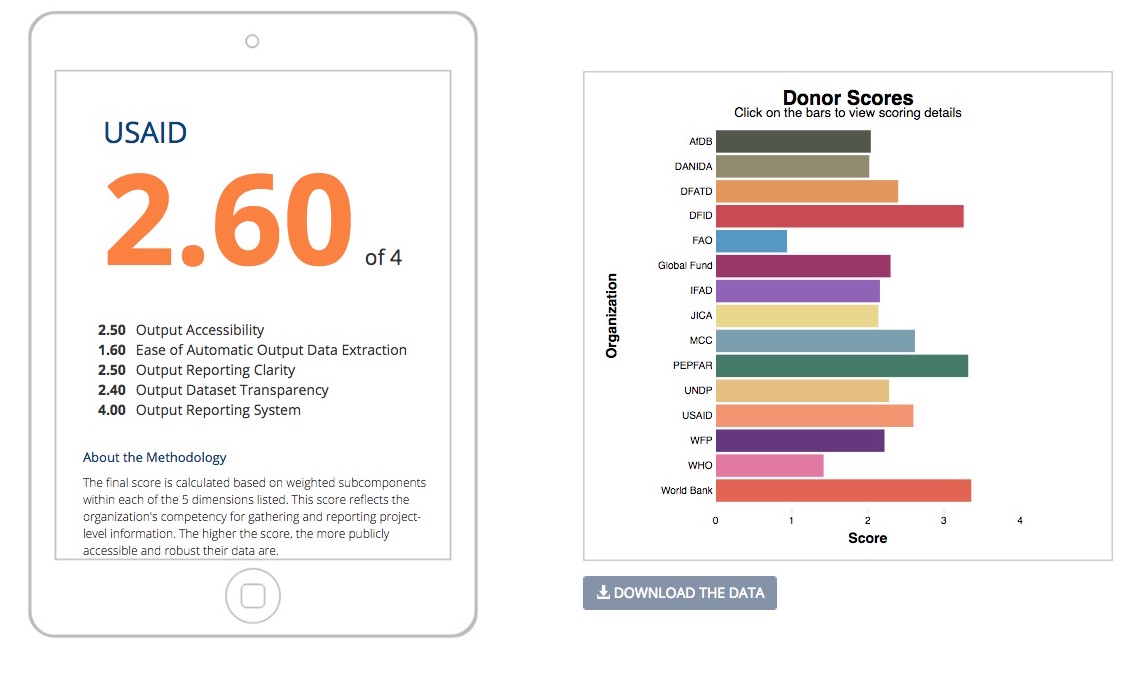

3. How can organizations report (monitoring) results more effectively?

Throughout the past year, we have noted that the accuracy and usefulness of our portal’s crosswalking depend on the amount and quality of information provided by donors. To this end, we’ve created a Results Reporting Scorecard that outlines how well organizations gather and publish monitoring data.

The idea here is not to “name and shame” organizations, but to offer recommendations that can inform their open data priorities. The scorecard outlines the key elements that development partners should have in place, such as standard M&E policies and online project data portals, in order to provide the type of data that makes cross-donor aggregations possible and useful.

We hope readers will explore, find useful insights, and provide feedback on the data; stay tuned for more information on our data crosswalking methodology and country-level findings.
