Opening Remarks: Avoiding Data Graveyards

These remarks were given at the DC launch event for the Avoiding Data Graveyards report on April 24th. Watch the full event online.

It is clear from this turnout that the issue of putting data and evidence to use — and understanding what can get in the way — is one that broadly resonates.

As one of the partners in this study, undertaken through the AidData Center for Development Policy with support from USAID’s Global Development Lab, I’d like to share why this research has been so meaningful to us at Development Gateway.

As many of you know, information management was baked into our DNA from the beginning. As a technology partner, we help governments progress along the data value chain. In the beginning, we worked to help governments manage financial flows (inputs), like who’s funding maternal health, and where in a country. This evolved to tracking outputs, like the training of community health workers, and more recently to exploring outcomes, like whether maternal mortality rates are declining. Over time, we sought new ways to help decision-makers interact with information visually through dashboards and maps. Later, thanks to advances in data standards like IATI and open contracting, we’ve focused on interoperability: helping different systems talk to one another.

These advances, while all critical building blocks, have given rise to a new set of questions:

- What happens to a data point once it’s collected?
- When does data become insight, and when does it never see the light of day?
- How and when do data & evidence have influence?

Why is this so challenging to untangle? Aren’t we just talking about cold, hard data points? Initially, yes, but ultimately we’re talking about institutions, politics, and processes that we hope will result in people using data and information to do something different. That’s behavior change, both individual and institutional, and that’s where things get messy.

So here’s where we’ve landed so far on this journey: to understand how technology and data can be useful and actionable, we need to devote the same intensity to understanding the humans and the institutions that use these tools as we do to developing technology or creating data in the first place. That is precisely what this study does: it is “action research”. These country studies have yielded actionable insights for our work and that of our government partners.

So, what’s next? Together with the AidData Center for Development Policy, and with continued support from USAID, we’ve taken the findings from the study, used them to test out new interventions, and we’ll be evaluating their impact—and of course, sharing what we learn.

The last point I want to make is about the value, and the necessity, of diverse partnerships in tackling complex challenges like this one. Understanding the human and institutional motivations, norms, cultures, and incentives (or lack thereof) around data is a multi-disciplinary undertaking. Partnerships like ours with AidData, UT, BYU, and Esri, which draw on our diverse strengths and experiences, are indicative of the kinds of approaches that we’ll need more of in the future if we want to chip away at this thorny issue of data uptake.

I hope the discussion today will prompt your own reflections, and help to inform our collective thinking around the kinds of approaches that can best position data and evidence to matter.


Related from our library

AMP Through the Ages

15 years ago, AMP development was led by and co-designed with multiple partner country governments and international organizations. From a single implementation, AMP grew into 25 implementations globally. Through this growth, DG has learned crucial lessons about building systems that support the use of data for decision-making.

Working with Partners to Find Solutions to Integration Challenges

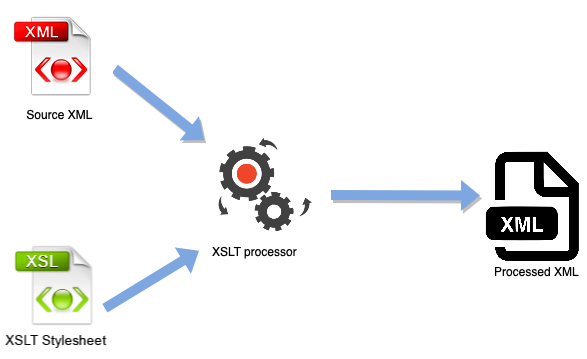

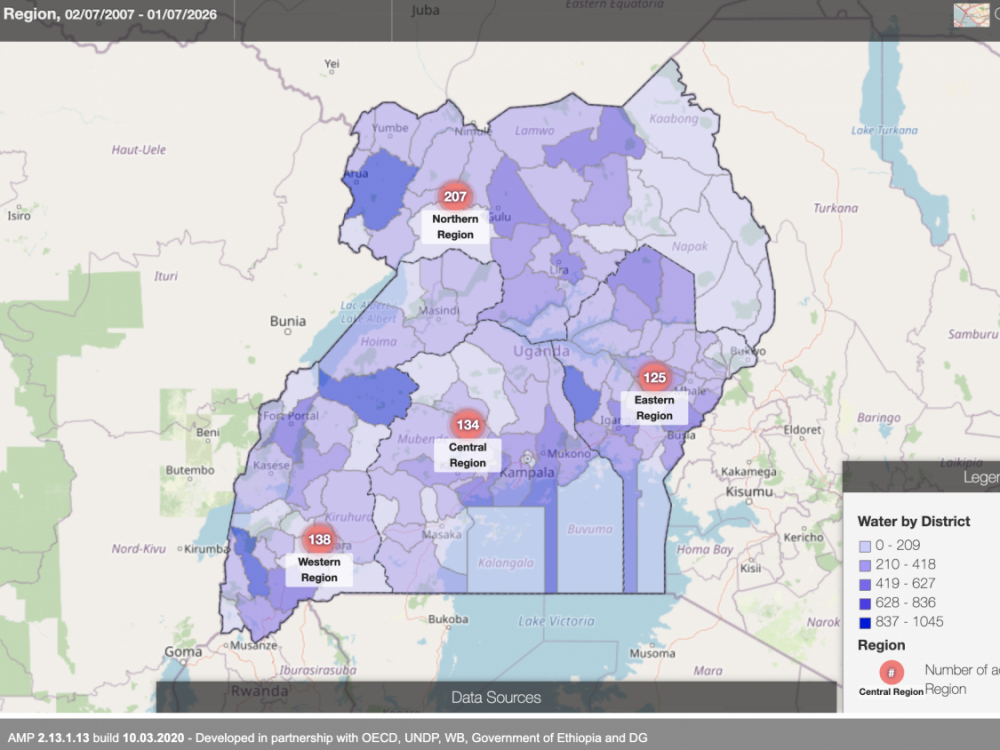

This past March, DG launched an AMP module that helps the Ministry of Finance, Planning, and Economic Development in Uganda track aid disbursements in their existing Program Budgeting System. This blog examines DG’s technical process and the specific solutions used to overcome AMP-Program Budgeting System (PBS) integration challenges.

Creating Integrations between AMP & Existing Country Systems

Since 2017, Development Gateway has been working with the Government of Uganda to build and update their Aid Management Platform (AMP). Uganda’s AMP houses over 1,300 on-budget projects directly from its national data management system. This year, DG built a module that interfaces with Uganda’s Program Budgeting System (PBS) to ensure that data is effectively transmitted between the two systems.