Big Data in Development: A Cautionary Note

Imagine a young woman in her mid-20s in Nairobi, Kenya, named Rehema. Twenty-four hours a day, seven days a week, she is surveilled. When she turns off her morning alarm, an app logs how many hours she slept. As Rehema jumps onboard a bus to go to work, her phone tracks her location. When she meets friends after work, she swipes her credit card to buy dinner – and the transaction is automatically tracked, logged, and categorized. Everything from Rehema’s online browsing habits to the stores she frequents and the music she likes are tracked and categorized by companies and third-party sources too many to count, many looking for ways to more effectively sell Rehema services and products.

Understandably, Rehema could be very upset if she knew the extent to which she was being surveilled. But a story like Rehema’s is already quite common. Increasingly, in using digital tools – from Nairobi to New York, London to Lusaka – we are handing over our data to faceless individuals, corporations, and agencies. We’re increasingly asking, how is that data being used? Who is using it? What are the principles that guide their use of that data?

We at Development Gateway follow and contribute to this conversation. Whether we’re improving healthcare delivery services, promoting government transparency, or developing any number of initiatives, we thoughtfully develop digital tools and provide services that not only avoid harm, but can more effectively deliver services to vulnerable populations.

Last month, we joined several development practitioners to address this topic on a panel titled, “Big Data, Big Impact: Understanding the role of big data in health, aid and development” at the 2019 International Aid and Development Global Forum (AIDF) in Washington, DC.

Figure 1: AIDF Panel on Big Data in International Aid, DG’s Andrea Ulrich on far right.

We spoke about the challenges and opportunities of adopting new and emerging technologies within international development. We identified the importance of being cautiously optimistic in utilizing new, unconventional data sources to fill data gaps, while also being mindful of biased data and the potential to further cement inequality.

Local NGOs, government policy makers, and practitioners alike struggle to design, develop, and monitor programs when we face a lack of high-quality data. Despite initiatives like the Global Partnership for Sustainable Development Data, higher data quality is still needed to make more effective decisions to improve service delivery.

Big data may be one approach to fill that data gap. Big data refers to a large amount of data moving with increasing velocity, variety, and volume. This kind of information is often referred to as “data exhaust,” or passively collected data created as a result of individuals’ interactions with digital services and tools, much as what we saw with Rehema in our example above.

So, how can the development community use big data practically and effectively – and not just as a buzzword? Below are five ideas to begin that conversation:

1. Investigate data biases.

It’s easy to make the risky assumption that “big data” and algorithms are unbiased. However, for example if we use mobile phone spending patterns as a proxy for poverty indicators, we will over-count young, urban men, and under-count women, the elderly, and other marginalized individuals. This leads to consequences that could worsen the “digital divide.” Further investment could double down to create even more data on young, urban men, with marginalized individuals fading further from view. Unless we account for this bias, we may fail to address key needs of the most vulnerable populations.

2. Design feedback loops.

Effective big data algorithms use checks and balances to verify that their algorithmic outputs are meaningful. They reference their outputs with other values considered to be gold standards. For example, FICO scores, which evaluate an individual’s credit worthiness, are considered well-designed in part because they are continuously validated by other financial institutions. Individuals can also log formal complaints if they feel that their FICO score was unfairly calculated. To ensure our own algorithms are valid, we can similarly invest in frequent data checks.

3. Build in responsible data practices.

Responsible data best practices should be baked into any new or existing data collection activities. Although many of us in the data community understand this principle, there’s much more work to be done. With new energy and investments funneling into unconventional data sources, we have an opportunity to adopt these practices. USAID, The Engine Room, and others have provided excellent resources on obtaining informed consent, cataloguing who benefits from collected data, and creating risk matrices and data sustainability plans. Because big data relies on aggregated passive data, we must consider privacy measures to protect individual information, such as data anonymization and fuzzing.

Figure 2: The Handbook of the Modern Development Specialist, a key resource produced by the Responsible Data Forum

4. Assess the institutional environment.

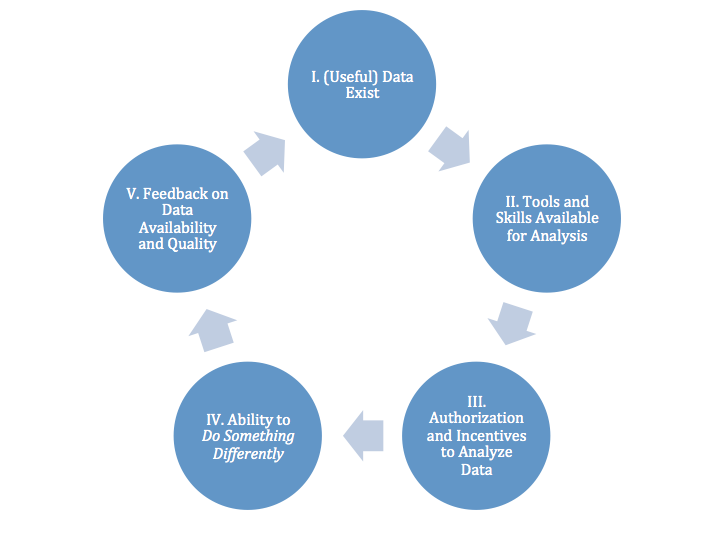

At DG, we’re constantly thinking about not just what kinds of data we’re using, but how, and to what end – we’re constantly considering the decision space that affect users and policy makers. To use data more effectively, we have to map how it is currently being used, what data is needed to make decisions, and who makes those decisions. In other words, we must understand the institutional factors within an organization that impact how data can be used effectively. Otherwise, new, unconventional data will be relegated to a data graveyard.

Figure 3: A series of questions related to decision space.

5. Accept that big data may not be the solution.

In some cases, using algorithms may do more harm than good, inadvertently hard-coding bias and cementing inequality. For example, when Amazon built a machine learning algorithm to assist in selecting top-tier job candidates, they found that the engine negatively scored female applicants for being women. The algorithm had “learned” by reviewing 10 years of Amazon’s hiring data, which was disproportionately representative of men. The researchers found that algorithms were being trained on already-biased datasets. Because of privacy and ethical issues, or a lack of unbiased data, big data is not always the solution.

By keeping these five principles in mind, big data can deliver big impacts in international development.

Used properly and carefully, we can access untapped data sources in a way that prioritizes the needs and rights of individuals, corrects for biases, and creates an environment where individuals like Rehema are able to rely upon their digital devices with greater peace of mind.

Share This Post

Related from our library

Developing Data Systems: Five Issues IREX and DG Explored at Festival de Datos

IREX and Development Gateway: An IREX Venture participated in Festival de Datos from November 7-9, 2023. In this blog, Philip Davidovich, Annie Kilroy, Josh Powell, and Tom Orrell explore five key issues discussed at Festival de Datos on advancing data systems and how IREX and DG are meeting these challenges.

Unlocking the potential of digital public infrastructure for climate data and agriculture: Malawi

DG’s DAS Program recently attended an event on creating a national digital public infrastructure (DPI) in Malawi in order to increase the impact of climate data to combat current and future agricultural issues caused by climate change. In this blog, we reflect on three insights on DPIs that were revealed during the event discussion.

What Does a Good Agriculture Data System Look Like? Reflections from 2023 Festival de Datos

DG's joint session at 2023 Festival de Datos posed the question: What does a “good” agriculture data system look like? In this blog post, we'll delve into the key principles that emerged from the discussion.